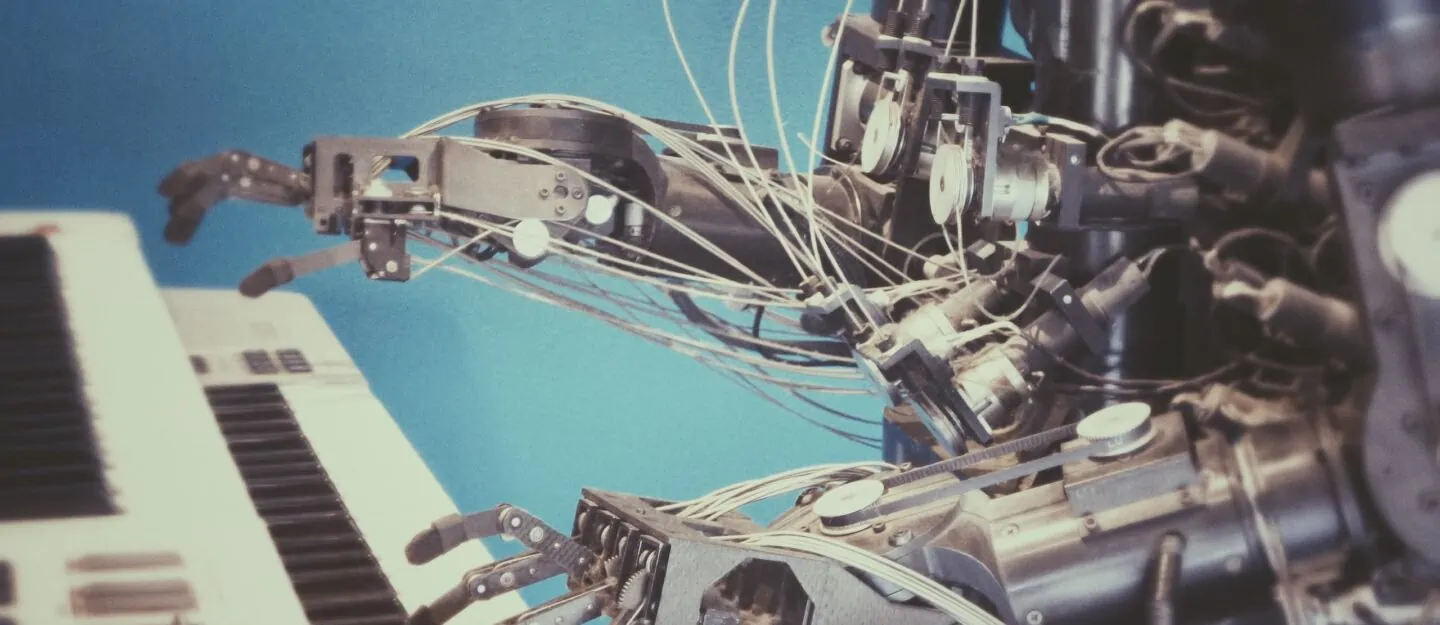

AI is becoming a key factor in the work environment

Digital transformation, automation, process optimization, technology tools to support workflows: these are all buzzwords that we encounter more and more often. The year 2020, with its major new challenges, gave these topics a real boost. The COVID-19 pandemic significantly accelerated the digitalization of the economy. Working from home became the new standard practically overnight; conferences and events were replaced by virtual experiences. Customers increasingly bought online and requested information via digital channels and social media.

So today, work is all about supporting and optimizing workflows and processes. Digital transformation, or rather the changed and optimized ways of working that go hand in hand with it, is the key to this. Anyone who has not yet taken action should now take a big step toward digitization.

The fact is that companies that have already pushed ahead with digitization and “new work” in recent years are at an advantage. Now, it is a matter of using the changes or challenges as an opportunity.

With the pandemic as a backdrop, there seems to be a consensus that technology is indispensable for mastering a crisis and emerging from it with as little damage as possible.

In this context, the topic of artificial intelligence (AI) is increasingly mentioned.

AI and ML are about competencies

Closely related to artificial intelligence is machine learning (ML). The terms AI and ML are often confused or equated. However, machine learning is not the same as artificial intelligence.

Artificial intelligence describes applications or technologies in which machines perform tasks that require human-like intelligence, such as learning or assessment. It is, therefore, a matter of competencies, or of imitating competencies, that were previously possessed only by humans. This also includes language and strategic thinking skills.

Discussions about AI often focus primarily on operational questions. Just as there is no “one intelligence,” there is also no “one artificial intelligence.” Strictly speaking, therefore, the term “AI methods” would probably make more sense, or even be more correct, but AI as a term is evidently perceived as “nicer.”

Machine learning is only one part of artificial intelligence, albeit an important one, and describes the generation of knowledge from experience. In simple terms, this technology teaches computers to learn from data, applications and feedback on decisions, so that they handle tasks ever more satisfactorily. The algorithms used in this process can recognize and derive patterns from unstructured data, such as text or images, and make decisions independently.

Algorithms require a solid data foundation

Self-learning algorithms, however, belong more to the realm of myths. It is not enough to simply “feed algorithms with data” and expect them to learn on their own. Rather, they are trained on sample data. An interesting example here is the authentication method in which we are asked to select all images showing mountains, bridges or cars from a set of images.

This image selection, like many similar methods such as CAPTCHA (Completely Automated Public Turing test to tell Computers and Humans Apart), is not primarily about training artificial neural networks. It is about “proving” that one is a human and not a machine. The aim is to prevent automated programs from using functions such as creating forum entries or submitting queries. It is thus a kind of identification, but at the same time, of course, neural networks can be trained with the results.

So these image selections are also used to train Google’s AI to recognize and classify images and to apply what it has learned elsewhere.

In machine learning, a distinction is made between different types.

Types of machine learning

- Unsupervised learning

This involves the recognition of patterns in data. An algorithm receives input and creates a model based on similarities, for example.

- Supervised learning

In this case, learning is done by training on the basis of data with already known outputs. For example, it is defined which category a piece of content should be assigned to. The system then determines which terms occur most often, or not at all, in the individual categories. New texts are then checked for these words and can thus be assigned to the corresponding category.

- Reinforcement learning

In this type of learning, an algorithm responds to reward. Again, external feedback is indispensable and a computer must be trained, for example, by losing or winning while learning a game.
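The supervised learning example described above, in which texts are assigned to categories based on the terms that occur in them, can be sketched in a few lines of Python. This is only a minimal illustration under simple assumptions: the training texts, the category names and the functions `tokenize`, `train` and `classify` are made up for this example, and real systems use far more data and more robust statistical models.

```python
from collections import Counter, defaultdict

PUNCTUATION = ".,!?;:()\"'"

def tokenize(text):
    """Split a text into lowercase words without surrounding punctuation."""
    words = (raw.strip(PUNCTUATION).lower() for raw in text.split())
    return [word for word in words if word]

def train(labeled_texts):
    """Count how often each word occurs in the texts of each category."""
    counts = defaultdict(Counter)
    for text, category in labeled_texts:
        counts[category].update(tokenize(text))
    return counts

def classify(counts, text):
    """Pick the category whose training words match the new text best."""
    words = tokenize(text)
    scores = {}
    for category, word_counts in counts.items():
        total = sum(word_counts.values())
        # Relative frequency of the new text's words within this category.
        scores[category] = sum(word_counts[word] for word in words) / total
    return max(scores, key=scores.get)

# Hypothetical training data with already known outputs (the categories).
TRAINING_DATA = [
    ("The printer in the office does not print", "hardware"),
    ("My laptop screen stays black", "hardware"),
    ("I forgot my password and cannot log in", "account"),
    ("Please reset the password for my account", "account"),
]

model = train(TRAINING_DATA)
print(classify(model, "I cannot log in with my new password"))  # account
print(classify(model, "The office printer does not print"))     # hardware
```

The sketch also shows why the quality of the training data matters: a category the model has never seen, or a text that shares no words with the training examples, cannot be assigned in a meaningful way.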

Today, advances in artificial intelligence are particularly evident in the processing of natural language. This field is known as natural language processing (NLP) and is used, among other things, in voice services such as Siri or Alexa.

In “deep learning,” an algorithm uses artificial neural networks to make decisions. This enables correlations to be recognized even in very large volumes of data.

How long has artificial intelligence been around?

Somehow, artificial intelligence still sounds a bit like science fiction, and people forget that this phenomenon has been around for a long time. Scientists have been pushing artificial intelligence further and further.

The birth of the term “artificial intelligence” can be traced to the summer of 1956 when U.S. logician, computer scientist and author John McCarthy organized a six-week conference on artificial intelligence at Dartmouth College in Hanover, New Hampshire.

This Dartmouth Conference is considered the kickoff of artificial intelligence research. McCarthy, along with American researcher Marvin Minsky, later founded the artificial intelligence group at MIT, a forerunner of today's MIT Computer Science and Artificial Intelligence Laboratory (CSAIL), which remains a leader in the field.

Marvin Minsky claimed in 1970 that machines would be reading Shakespeare within a few years.

A milestone that is still well known came in 1997, when IBM's computer “Deep Blue” defeated the then world chess champion Garry Kasparov in a six-game match. Equally notable were two later events. In 2005, the vehicle “Stanley” became the first fully autonomous land vehicle to negotiate a course through the desert southwest of Las Vegas. In 2011, IBM’s computer “Watson” won on the quiz show “Jeopardy!” because it could analyze the questions posed faster and more accurately than its human competitors.

And, of course, there was the birth of “Siri” as part of the iPhone operating system in 2011. The voice recognition software assists with daily tasks and can, for example, make calendar entries or conduct an Internet search by voice command.

Exposure to AI

Some people probably still think they have had no exposure to AI. That is understandable, because it is not always clear to us how often we actually use AI in our everyday lives.

Digital Assistance

Of course, that’s certainly the first thing many people think of when they talk about artificial intelligence. Today, smartphones use AI to make the user experience as personal as possible. Voice assistants answer our questions, make suggestions, and help us organize our everyday tasks.

Search on the Internet

This is something each of us now does daily, almost certainly several times, and by now almost unconsciously. “Do you know…?” “Google it.” That’s how it goes today. Completely normal.

Translations

Multilingualism is virtually unavoidable in today’s professional world. At least some knowledge of English is needed, because most companies operate internationally. But even if you speak another language in meetings or conferences, translation tools are often used for written communication or longer texts, at least for a first draft. These tools also rely on artificial intelligence.

Shopping

Today, customers receive personalized recommendations online. Here, AI is used to make the most suitable offers possible on the basis of purchases already made or product searches. It is easy to imagine that AI is also of great importance for retail.

These are just a few examples of where we encounter AI in our daily lives.

Use of AI

On the whole, we can say that AI is gaining importance in almost all areas. Basically, AI is of interest to all industries where large amounts of data need to be processed.

Less well-known areas of application include manufacturing, where quality control can be supported by algorithms. In healthcare, for example, an AI program for emergency calls has been developed that uses a digital assistant to help identify cardiac arrests more quickly and accurately. AI is even being used in agriculture, where robots can do the weeding and thus reduce the use of herbicides.

AI and the service desk

Artificial intelligence can also play a role for service management systems, such as OTRS. This makes sense, because these tools are primarily used to structure communication, automate recurring tasks and optimize processes.

In other words, they provide professional, technological support for handling the concerns of internal and external customers optimally and efficiently. This is particularly important in times of increasingly decentralized teams. In the case of OTRS, meaningful uses of AI have been explored for some time. These could primarily involve automation and support for agents, for example, through machine classification and allocation of support requests.

AI fulfills this role in contemporary service management software by analyzing and intelligently enriching data and information.

AI can make a service desk's job easier.

AI can make the work of a service desk much easier. For example, the system can provide agents with information or entries from the knowledge database that match the text entered. Ideally, they can even receive all the important information about the user, such as SLAs or contract details.
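How a system might match knowledge base entries to the text an agent or customer has entered can be illustrated with a small Python sketch. It compares word counts using cosine similarity, which is only one simple technique among many and not a description of how OTRS or any other product works. The article titles and texts, as well as the function names `vectorize`, `cosine` and `suggest_articles`, are assumptions made for this example.

```python
import math
from collections import Counter

def vectorize(text):
    """Turn a text into a bag of lowercase words with their counts."""
    words = (raw.strip(".,!?").lower() for raw in text.split())
    return Counter(word for word in words if word)

def cosine(a, b):
    """Cosine similarity between two word-count vectors (0.0 to 1.0)."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def suggest_articles(ticket_text, articles, top_n=2):
    """Return the titles of the articles most similar to the ticket text."""
    query = vectorize(ticket_text)
    ranked = sorted(
        articles,
        key=lambda title: cosine(query, vectorize(articles[title])),
        reverse=True,
    )
    return ranked[:top_n]

# Hypothetical knowledge base: title -> article text.
KNOWLEDGE_BASE = {
    "Reset your password": "How to reset a forgotten password for your account",
    "Set up the VPN": "Install the VPN client and connect to the company network",
    "Printer troubleshooting": "What to do when the printer does not print",
}

ticket = "The VPN does not connect to the network"
print(suggest_articles(ticket, KNOWLEDGE_BASE, top_n=1))
```

In practice, common filler words such as “the” or “to” would be filtered out or weighted down, because they make unrelated texts look more similar than they are.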

AI can also be of great benefit to management, whose task is to maintain or optimize the efficiency and quality of the service desk, particularly for analysis and reporting. For example, by grouping tickets under topic headings based on their content, AI can show which topics generate a particularly large number of tickets or incidents. Ideally, this works independently of how the incidents were originally described or categorized.
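The idea of grouping tickets by content, rather than by the category someone assigned to them, can be sketched as follows. The example uses a very simple greedy grouping based on word overlap (Jaccard similarity). The ticket texts, the stop word list, the threshold of 0.1 and the function names are all assumptions chosen for illustration; a production system would use considerably more robust methods.

```python
# Hypothetical list of filler words that carry no topic information.
STOPWORDS = {"the", "on", "to", "in", "not", "does", "a", "after"}

def content_words(text):
    """Return the set of lowercase words in a text, without filler words."""
    words = {raw.strip(".,!?").lower() for raw in text.split()}
    return words - STOPWORDS - {""}

def jaccard(a, b):
    """Share of words two sets have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def group_tickets(tickets, threshold=0.1):
    """Greedily put each ticket into the first group it resembles."""
    groups = []
    for ticket in tickets:
        for group in groups:
            # Compare against the first ticket of the group only.
            if jaccard(content_words(ticket), content_words(group[0])) >= threshold:
                group.append(ticket)
                break
        else:
            groups.append([ticket])
    # Largest groups first, so the most frequent topics are at the top.
    return sorted(groups, key=len, reverse=True)

TICKETS = [
    "Printer on floor 2 does not print",
    "Cannot log in to email",
    "The printer prints blank pages",
    "Email login fails after password change",
    "Printer shows paper jam",
]

for group in group_tickets(TICKETS):
    print(len(group), group)
```

With these sample tickets, the three printer tickets end up in one group and the two email tickets in another, even though none of them was categorized in advance.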

In almost every industry, ideas and possibilities are currently being developed to increase efficiency by automating processes. This frees up space for creativity and strategic tasks.

Is AI putting us out of work, and what will happen next?

This is a fear that some express, but let’s start by looking at the situation in a positive light. AI simplifies and automates workflows, relieving people of repetitive tasks. This frees up space for other tasks. It also enables faster decision-making thanks to a better data basis. This makes it easier for companies to respond to changing circumstances and adapt to new market conditions. In other words, AI increases the competitiveness of companies.

But yes, when robots or machines take over work, jobs could be eliminated as a result. However, the amount of work itself will not decrease, because new areas of responsibility will emerge. First and foremost, AI will take monotonous activities off our hands and will thus create more creative jobs.

In summary, AI cannot replace humans, because it is always dependent on human programming and input. Moreover, artificial intelligence is always “artificial,” i.e., constructed, and by definition will never be “real.” Until we as humans create “real intelligence” in non-human form, artificial intelligence will never be able to replace real intelligence.

Even if machines today already have analytical capabilities with which they can make thoroughly complicated decisions, the knowledge used for this is not always sufficient. Human intuition, which plays a major role in many decisions today, is missing.

Computers can drive a car or make an appointment or win a chess game. But they don’t know how that feels because they remain machines.
